Use LLM Response Streaming for Voice Interactions

To make voice conversations feel more natural and fluid, use the LlmStreamer internal integration. It lets the AI Agent stream the model output in real time instead of waiting for the full generation to complete.

NOTE: LLM Response Streaming for voice interactions was introduced as a technology preview in Druid 9.11 and is generally available (GA) starting with Druid 9.19.

Prerequisite

- Before you start, make sure you have an LLM resource set up in the Druid Portal. For more information, see Create LLM Resources.

Add LLM Streaming to a Run Agent Step

Follow these steps to enable LLM response streaming in your agentic flow:

- Open your agentic flow and click the Run Agent step.

- Scroll to the Post Actions section.

- If an LLM integration is already configured, remove it.

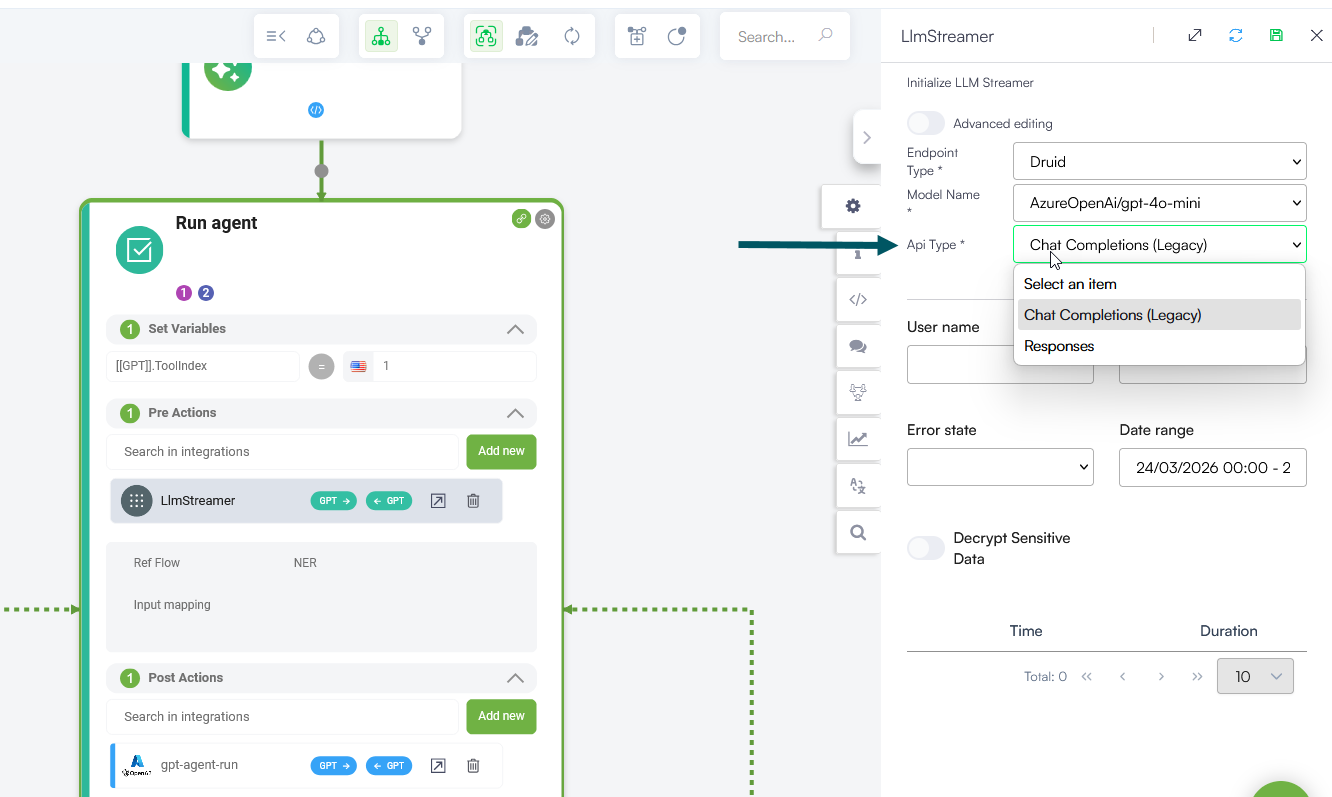

- Add the LlmStreamer internal integration to the step's Post Actions.

- Click the LlmStreamer integration and configure it as follows:

  - Endpoint Type: Select the provider (e.g., Druid).

  - Model Name: Choose the model you want to use from the dropdown.

  - Api Type: Available in Druid 9.19 and later, this field appears only when you select an Azure OpenAI model (e.g., AzureOpenAi/gpt-4o-mini). Select one of the following:

    - Responses (Recommended): The preferred API for Azure OpenAI models, offering better performance and more intelligent interactions.

    - Chat Completions (Legacy): Use this option only for backward compatibility with existing configurations.

- Save the step and publish your flow.

Info: For providers other than Azure OpenAI, the Api Type field is not displayed and the platform uses the standard completion settings compatible with those models.

Once streaming is enabled, voice interactions return partial responses as they are generated. The AI Agent can start responding sooner, which makes conversations feel more fluid and natural.
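To illustrate why partial responses help in a voice channel, here is a minimal, generic Python sketch. It is not the LlmStreamer implementation and does not use any Druid API; the helpers `chunk_for_speech` and `fake_llm_stream` (and the sample tokens) are hypothetical. The sketch groups streamed tokens into sentence-sized chunks so that a text-to-speech engine could start speaking the first sentence while the rest of the answer is still being generated.

```python
from typing import Iterable, Iterator

# Characters treated as sentence boundaries (a deliberate simplification).
SENTENCE_END = (".", "!", "?")


def chunk_for_speech(tokens: Iterable[str]) -> Iterator[str]:
    """Group streamed tokens into sentence-sized chunks.

    Each chunk is yielded as soon as a sentence boundary arrives, so a
    text-to-speech engine could start speaking before generation finishes.
    """
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith(SENTENCE_END):
            yield buffer.strip()
            buffer = ""
    # Flush any trailing text that did not end with punctuation.
    if buffer.strip():
        yield buffer.strip()


def fake_llm_stream() -> Iterator[str]:
    """Hypothetical stand-in for a streaming LLM response (not a Druid API)."""
    tokens = ["Hello", "!", " Your", " order", " has", " shipped", ".",
              " Anything", " else", "?"]
    for token in tokens:
        yield token


if __name__ == "__main__":
    # Each sentence is printed as soon as it is complete.
    for chunk in chunk_for_speech(fake_llm_stream()):
        print("speak:", chunk)
```

Splitting only on `.`, `!`, and `?` keeps the sketch short; abbreviations such as "e.g." or decimal numbers would need smarter boundary detection in a real voice pipeline.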